
We usually hear the expression “value added” associated with the enhancement and aggregation of materials and labor that a company applies to its products during manufacturing. However, adding value to products and services is only one element of creating value for customers and shareholders. The other important element is “separation”. Both Lean and 80/20 use separation as a way to purify processes (just as in chemistry) and deliver more value. Lean principles are based on value creation from the customer’s point of view. As you identify the work steps, you eliminate barriers that prevent a smooth production flow. Continuous flow is characterized by rhythm, or “takt time”, and is dictated by customer demand (pull). Throughout the process, you isolate and remove steps that do not add value or that create waste (“muda”). In other words, to achieve lean you must have an undisturbed, interference-free, customer-focused process. You need to separate waste from value-adding steps. Similarly, the 80/20 Business Process places an ultra-high focus on select groups of customers and products, avoiding distractions from the core work. This extreme focus helps capitalize on the natural imbalances implied by the 80/20 Principle. Separation keeps the complexity created by the “trivial many” from contaminating the “vital few”, and it turns the “eighty” into a center of attention. Separation is what keeps the chaff away from the wheat. It’s an important management concept, applied to create focus as well as to impart autonomy. It’s not meant to build walls and create isolated fiefdoms. The main reasons to apply separation are:

Managers should understand (and make up their minds about) what is important and what is not. At a minimum, they should select the core segments in which to compete, the key customers to partner with, the central products to lead with and the fundamental business processes that deliver value. Once they select the vital few, managers can separate and nurture these core areas by asking: how can we increase the degrees of separation between the core and the rest? To separate a core market segment from all others, for example, you can start by creating a dedicated sales and marketing organization for that segment. You can also set up distinct production lines and generate a “core segment P&L” to monitor progress. These are all part of what I call the first separation stage, or degree. It’s one framework for building centers of attention around core activities. Add another degree of separation and you create autonomous business units focused on the core segments. The third stage is to operate with completely separate and autonomous companies, as Berkshire Hathaway and most private equity firms do.

Separation stages are closely coupled with the way companies grow. Stage one companies bloom and expand like a shrub. Stage two companies grow like trees, branching out into new market segments. Stage three companies grow like a forest, spreading out and diversifying. Forests grow in fractal ways, in patterns that repeat themselves at different scales (self-similarity). The resemblance to stage three companies is clear: they diversify into different segments and industries while keeping the value-creation model intact across the different companies. Whether you call them satellite companies, divisions, business units, focused plants or service centers, the distributed and decentralized organization is better prepared to deliver value over the long haul.

If executed with methodologies such as lean and 80/20, separation and decentralization have the potential to transform the business into a network of value-creating nodes. The benefits of this operating model are reflected in the following passage from Warren Buffett’s 1979 letter to investors, which expresses his faith and confidence in the independence and empowerment of business units. The thinking can be summarized in one statement: “If you love the management, set them free.”

“To the Shareholders of Berkshire Hathaway Inc.: Your company is run on the principle of centralization of financial decisions at the top (the very top, it might be added), and rather extreme delegation of operating authority to a number of key managers at the individual company or BU level. We could just field a basketball team with our corporate headquarters group (which utilizes only about 1,500 square feet of space). This approach produces an occasional major mistake that might have been eliminated or minimized through closer operating controls. But it also eliminates large layers of costs and dramatically speeds decision-making. Because everyone has a great deal to do, a very great deal gets done. Most important of all, it enables us to attract and retain some extraordinarily talented individuals—people who simply can’t be hired in the normal course of events—who find working for Berkshire to be almost identical to running their own show. We have placed much trust in them—and their achievements have far exceeded that trust.” [i]

Some of the best-performing companies in the world are decentralized: Berkshire Hathaway, GE, ITW, J&J and many others. They’ve evolved from conventional, monolithic structures into networks of companies and business units, while keeping their original values intact. Their leaders realized early on that they needed to move from the “top of the hierarchy to the center of the network”. They went from “command and control” to “influence and connectivity”.
They fostered segmentation and enabled the value-creating nodes in the network to grow and multiply. But let’s not get lost in the debate about centralization versus decentralization. You don’t need to wait until you are completely decentralized to start applying separation as a management principle. You can start right away by selecting core areas and deciding how many degrees of separation to apply. At a minimum, the separation mentality can be applied to customers, products and processes, as shown in the table below. The key when applying separation to customers is to identify and place an extreme focus on the few customers that account for eighty percent of sales and margins, as well as on the few “twenty” customers that have strategic value to the business. Know everything there is to know about your “eighty” customers. Walk in their shoes frequently to understand their pain points and needs, and involve them in your product innovation efforts. The degrees of separation among customers range from differentiated sales and support, through unique commercial policies, all the way to rechanneling and, if necessary, firing “twenty” customers.
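
Identifying the “eighty” customers is, at its core, a ranking exercise. The sketch below is a minimal illustration, assuming you have total sales per customer for a period; the function name `split_eighty_twenty`, its `cutoff` parameter and the sample figures are hypothetical. It ranks customers by sales and assigns them to the “eighty” group until their cumulative share reaches the cutoff. A real 80/20 analysis would also look at margins, not just sales.

```python
from typing import Dict, List, Tuple


def split_eighty_twenty(
    sales_by_customer: Dict[str, float], cutoff: float = 0.80
) -> Tuple[List[str], List[str]]:
    """Split customers into the 'eighty' (the few that together reach `cutoff`
    of total sales) and the 'twenty' (everyone else).

    Hypothetical helper for illustration; assumes non-negative sales figures.
    """
    total = sum(sales_by_customer.values())
    # Rank customers from largest to smallest sales.
    ranked = sorted(sales_by_customer.items(), key=lambda item: item[1], reverse=True)

    eighty: List[str] = []
    twenty: List[str] = []
    cumulative = 0.0
    for customer, amount in ranked:
        # Keep adding customers to the 'eighty' until the running total reaches
        # the cutoff; the customer that crosses the threshold is included.
        if cumulative < cutoff * total:
            eighty.append(customer)
        else:
            twenty.append(customer)
        cumulative += amount
    return eighty, twenty


if __name__ == "__main__":
    # Hypothetical annual sales per customer, in thousands of dollars.
    sales = {"A": 500, "B": 250, "C": 100, "D": 60, "E": 50, "F": 40}
    eighty, twenty = split_eighty_twenty(sales)
    print("eighty:", eighty)  # eighty: ['A', 'B', 'C']
    print("twenty:", twenty)  # twenty: ['D', 'E', 'F']
```

In this made-up example, three of six customers account for 85% of sales. The same function can be run on margin dollars instead of sales, which is closer to the “sales and margins” focus described above.
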

Many companies already treat their “eighty” customers specially. At least they should. But the vast majority don’t clearly define the borders between the core and the rest, and they lack defined ways to create separation, such as dedicated account teams and unique commercial policies. All customers need to be treated fairly, but treating all customers equally can lead to disaster. Stage three companies have separate value chains that align distinctly with “eighty” and “twenty” customers. The value created by each chain is proportional to what it delivers and to the complexity (overhead) each business has to live with. In most cases, customers are clearly told the difference between these chains and appreciate getting fair value for what they are buying.

When it comes to products and processes, there are many ways to create separation, ranging from an extreme focus on “eighty” products all the way to investing in separate businesses that deal distinctly with core and non-core. It means separating the mainstream products, which have less variation, from the infrequent ones, which carry greater complexity. Detached businesses have different value chains and are uniquely positioned in the market. Another separation technique is to outsource non-core products to specialized suppliers. Outsourcing makes it simpler to place a value on these items: the cost of making them available to customers is listed on the supplier invoice, whereas the internal costs of low-volume or specialty products are not always accurate, due to hidden complexity. The first separation degree on the shop floor is to distinguish the production methods for core and non-core. The “eighty” needs to be made in the most efficient way possible, which can be achieved with lean manufacturing techniques such as one-piece flow, takt time and pull systems. A separation method used in lean is to uncouple customer demand (pull) from orders to suppliers, improving production flow and rhythm.
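
Takt time, mentioned above, is the available production time divided by customer demand. The sketch below is a minimal illustration, assuming a single line dedicated to the “eighty”; the function names and figures are hypothetical. It also applies the common lean rule of thumb that the number of operators needed is the total manual work content divided by takt time, rounded up.

```python
import math


def takt_time_seconds(available_minutes: float, demand_units: float) -> float:
    """Takt time = available production time / customer demand (seconds per unit)."""
    if demand_units <= 0:
        raise ValueError("customer demand must be positive")
    return available_minutes * 60.0 / demand_units


def operators_needed(work_content_seconds: float, takt_seconds: float) -> int:
    """Minimum operators to keep pace with takt: total work content / takt, rounded up."""
    return math.ceil(work_content_seconds / takt_seconds)


if __name__ == "__main__":
    # Hypothetical shift: 480 minutes minus a 30-minute break, 450 units of demand.
    takt = takt_time_seconds(available_minutes=450, demand_units=450)
    print(takt)  # 60.0
    # Hypothetical product with 310 seconds of manual work per unit.
    print(operators_needed(work_content_seconds=310, takt_seconds=takt))  # 6
```

Because takt time is derived from the demand of the line it serves, giving the “eighty” its own line lets it run at a steady rhythm that is not disturbed by low-volume or specialty orders.
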
At a higher degree, companies use 80/20 techniques, such as inlining of high-volume products and MRD (market rate of demand), to further optimize and simplify production of the “eighty”. 80/20 also separates period costs from variable costs when costing products, improving the accuracy of contribution margins. The third separation degree is achieved by investing in discrete business units, or separate companies, that manufacture the core away from the low-volume or specialty products. With isolated plants and P&Ls for low-volume and specialty products, it’s possible to understand the real cost of complexity and thus price correctly in the market. As the business expands, it will segment further and separate more complexity from the core. But when we carve out a new business, plant or production line, it’s important to consider its ability to add value as a stand-alone unit. To do this, I ask the team and myself four types of questions, which I consider the pillars for creating a new node in the network:

To conclude, I believe that most companies know the difference between core and trivial businesses. The problem is that managers don’t take steps to separate them, allowing the trivial to contaminate the core. The word “focus” is constantly used (or misused), but in many cases there is no action behind it. What does it mean to increase focus? What is needed? Separation works because it makes the opportunity stand out: it places a new, visible node in the value-creation network. So let’s put some meaning behind the word “focus” and enhance value creation not only by adding parts and labor to our products, but also by simplifying our business and putting some distance between what makes it great and what makes it go.

[i] Warren E. Buffett, 1979 letter to Berkshire Hathaway investors (published March 3, 1980), http://www.berkshirehathaway.com/letters/1979.html. Retrieved October 2015.


There is a better way to create plans, using business analytics (BA) and 80/20. Organizations of all sizes should take steps to explore the wealth of information available and commit to data-driven planning and decision-making. They should also rethink the yearly budgeting process to make it more adaptive and analytical. The goal is a leaner and more robust process that delivers greater accuracy and agility. Companies create budgets, or annual operating plans (AOP), with a strong focus on financial targets. For the most part, they aim to reach strategic milestones and close gaps. But typically they end up with plans that emphasize negotiated objectives and boundaries, with far less focus on optimization and transformation. Budgets, by and large, become a financial exercise in compromise. Jack Welch (former Chairman of GE) calls the budgeting process “the most ineffective practice in management”. He goes on to say that the budget process is an “exercise in minimization. You’re always getting the lowest out of people because everyone is negotiating to get to the lowest number”. Accuracy, relevance and ability to execute are the greatest weaknesses of typical annual plans. During preparation, budgets consume too much of management’s attention, and they can become irrelevant very quickly as conditions change (and they always do). Budgets are also known to be fixed and inflexible. To make matters worse, most companies tie executive compensation to performance against the budget rather than against previous years or industry benchmarks. Not to mention the huge amount of time and effort spent on budget preparation and data gathering; in fact, more effort goes into gathering data than into analyzing it. Plan iterations are usually the outcome of negotiating sessions among managers, as opposed to teamwork and analysis of contextual information that leads to foresight. Managers spend too much time bargaining and setting boundaries, and not enough time planning for execution.
No wonder many companies fail for lack of effective operating and strategic plans. The solution is to create an adaptive, analytical performance management process. Instead of cramming the entire exercise into annual planning seasons and quarterly or semi-annual reviews, companies should uncouple forecasting and financial analysis from planning itself and make them ongoing activities of the organization. Once you uncouple them, what is left for the planning season is more prescriptive work (how to make it happen), as opposed to data gathering and number crunching. The year-round activities (reporting, analysis and forecasting) receive a large dose of intelligence from BA, while the once-a-year activity (planning) is redefined to focus on prescriptive planning. The benefits are greater adaptability, accuracy and relevance to the organization. Once you uncouple the planning elements, you end up with three parts:

Step two above (rolling forecasts based on analytics) is key to creating an ongoing cycle of planning, execution and evaluation. It also adds intelligence to the process by tying the forecast to key business drivers. These two elements make the company more agile and dynamic in its planning. Rolling forecasts coupled with analytics reduce uncertainty in planning and forecasting while encouraging constant assessment of the plan throughout the organization. A good example of a company that benefits from this approach is Southwest Airlines. Given the volatility of the airline industry, it has opted for a 12-month rolling forecast and quarterly planning. This approach has worked very well for the company, which has been consistently profitable for over 30 years in a very tough industry. To succeed, it’s also important to blend actual performance with analytics. Forecasts need to be created rapidly, with less complexity and cost, so it’s important to structure the data and the analyses and provide a decent level of automation. Bear in mind that analytics is not the sole responsibility of the IT department, just as budgeting is not the exclusive responsibility of the finance department. BA is a capability that needs to be developed by all functional areas and business units, while IT provides access to data and analytical tools. Typical areas to apply predictive analytics are as follows:

Predictive analytics is by no means exclusive to e-businesses and is already penetrating brick-and-mortar manufacturing and distribution companies. Data is easy to collect and access. The millennial, tech-savvy generation is taking over. New, more cost-effective tools are available, and companies are evolving quickly to more advanced levels. A few leading-edge firms are already using prescriptive analytics. So allow me to take a moment to explain the different types of analytics.
The first type is known as descriptive analytics, and it is used by the majority of companies, in more or less structured ways, through standard and ad hoc reports and financial analysis. It looks at history, tells you what happened to the business and how often, and derives actions to fix problems. Companies have different ways to extract insight and diagnose why something happened. Management uses KPIs and other indicators that point to root causes and provide clues about potential actions to stop a problem from recurring and to improve results. But keep in mind that this is all looking in the rear-view mirror and explaining what happened. While this approach can provide reasonable insight, the knowledge gained is all hindsight, and the future depends solely on critical analysis and management experience. The second category of BA is known as predictive analytics. It uses data mining techniques to look for hidden patterns and correlations in contextual data, in order to derive predictors that point to the future. Today, e-commerce companies are the primary users of these tools. However, more companies are waking up to the power of predictive analytics. On top of the performance improvement this method offers, there is an incredible opportunity for manufacturers to improve the longevity and reliability of products and production lines, for example by using predictors for maintenance and early detection of product failures. The most advanced type is prescriptive analytics. It’s used to find the best course of action for a given situation, drawing on both the descriptive and predictive types. It’s a natural evolution for companies that consistently mine and analyze data and try to establish correlations and trends. It presents you with actionable options for developing data-based optimization plans.
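
The 12-month rolling forecast mentioned earlier can be illustrated with a deliberately simple predictive model. The sketch below assumes monthly actuals and uses an ordinary least-squares linear trend over the most recent 12 months as a stand-in for the driver-based models discussed in the text; the function names and the `window` and `horizon` parameters are hypothetical. Each time a new month of actuals arrives, the model is refit and the forecast again extends 12 months ahead, which is what makes it “rolling”.

```python
from typing import List, Tuple


def linear_trend(values: List[float]) -> Tuple[float, float]:
    """Fit y = intercept + slope * x by ordinary least squares, with x = 0, 1, ..., n-1."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points to fit a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(range(n), values))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return slope, intercept


def rolling_forecast(actuals: List[float], window: int = 12, horizon: int = 12) -> List[float]:
    """Refit the trend on the latest `window` months and project `horizon` months ahead."""
    history = actuals[-window:]
    slope, intercept = linear_trend(history)
    n = len(history)
    # Month index n is the first month after the last actual in the window.
    return [intercept + slope * (n + h) for h in range(horizon)]


if __name__ == "__main__":
    # Hypothetical monthly sales (in $k) growing by 5 per month.
    actuals = [100 + 5 * month for month in range(12)]
    print(rolling_forecast(actuals)[:3])  # [160.0, 165.0, 170.0]
    # When next month's actual arrives, append it and roll the forecast forward.
    actuals.append(158)
    print(len(rolling_forecast(actuals)))  # 12
```

A linear trend only describes history; tying the forecast to business drivers (orders, market indicators, pricing actions) is what turns it into the predictive tool the text describes.
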
Prescriptive analytics is an evolving field, and advanced techniques are being tested, including artificial intelligence software that uses both structured and unstructured data. You can start applying the prescriptive mindset right away by mining the data that is already on your servers. In fact, 80/20 analytics is based on simple ways of evaluating correlated sets of data, such as products and customers, to arrive at optimization strategies. Many predictors are similar to the KPIs used by managers. The difference is that most KPIs are set based on historical analysis and goals, while predictors are set based on data analytics, for relevance and insight. They allow you to set smart goals while keeping the organization focused on fewer issues. Although Google’s analytics tools are aimed at online businesses, they are a good example of how to use prescriptive analytics. Google offers users a Prediction API (application programming interface) that provides pattern matching and machine learning capabilities. After it learns from a set of training data, the API predicts future trends, detects changes in usual patterns and recommends actions. It does amazing things with remarkable accuracy, like predicting the spending patterns of online shoppers or customer attitudes toward a certain brand based on positive or negative feedback, and much more. I believe such tools will become mainstream in all types of industries in the very near future. The picture below shows the three areas required to develop an adaptive planning process using business analytics.

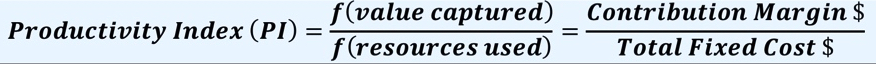

While both predictive and prescriptive analytics are often associated with big data and sophisticated algorithms, the basic principles behind these tools are down-to-earth and useful to managers who are trying to focus on what is important (the “eighty”), mitigate risks and know which levers are most effective if they run into a problem during execution. Planning with 80/20 analytics requires that we spend time up front analyzing contextual data and identifying the few key pressure points and optimization actions. Let me give you a simplified, practical example of how a distribution business can improve its profitability outlook by applying adaptive planning and analytics. Like manufacturing companies, distribution companies want to improve their return on invested capital (ROIC) or, at a minimum, their return on assets (ROA). ROIC is a profitability ratio that tells us how good a company is at turning capital into profits. Companies want their ROIC to be attractive so that investors continue to allocate capital. ROA indicates how profitable a company is relative to its total assets; it is a close relative of ROIC and is especially useful for distribution businesses, since most of their investment sits in inventory. To improve these metrics, the business has two strategies available: 1) increase return on sales, in terms of EBIT (earnings before interest and taxes), or 2) turn its assets faster (or reduce the assets employed). In our experience, a reduction in assets (inventory and accounts receivable) or invested capital (CAPEX) has to go far beyond normal (or even healthy) levels to significantly impact ROA and ROIC. Such reductions can improve free cash flow in the short run, but they will not necessarily make the business better.
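
The two strategies above follow from a simple identity: ROA = (EBIT / sales) × (sales / assets), that is, return on sales times asset turns. The sketch below is a minimal, pre-tax illustration using EBIT over total assets (a full ROIC calculation would typically use after-tax operating profit over invested capital); the function names and figures are hypothetical. It compares the gain from one extra point of return on sales with the asset reduction needed to reach the same ROA.

```python
def return_on_assets(sales: float, ebit: float, assets: float) -> float:
    """ROA decomposed into return on sales x asset turns (equal to ebit / assets)."""
    return_on_sales = ebit / sales
    asset_turns = sales / assets
    return return_on_sales * asset_turns


def assets_for_target_roa(ebit: float, target_roa: float) -> float:
    """Asset base at which a given EBIT produces the target ROA."""
    return ebit / target_roa


if __name__ == "__main__":
    # Hypothetical distributor, figures in $ millions.
    sales, ebit, assets = 100.0, 8.0, 50.0
    base = return_on_assets(sales, ebit, assets)          # 8% ROS x 2.0 turns = 16%
    better_margin = return_on_assets(sales, 9.0, assets)  # 9% ROS x 2.0 turns = 18%
    # Asset cut needed to reach the same 18% without improving EBIT.
    required_assets = assets_for_target_roa(ebit, better_margin)
    cut = 1 - required_assets / assets
    print(f"base ROA {base:.1%}, with +1 pt ROS {better_margin:.1%}")
    print(f"equivalent asset reduction: {cut:.1%}")
```

In this made-up case, one extra point of return on sales is worth as much as cutting about 11% of the asset base while selling just as much. Which lever is realistic depends on the business, and that is what the analytics should inform.
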
The other way is to improve the fitness level of the company by increasing the efficiency reflected in the Productivity Index (PI), which was discussed in a previous article. PI is the ratio between contribution margin dollars and overhead dollars. The crux of the issue is knowing which levers are most effective in increasing ROIC (pricing, overhead cuts, inventory reduction, etc.) while continuing to march toward profitable growth and value-creation goals. In other words: what is the art of the possible without damaging the business? By applying business analytics connected to a rolling forecast, companies can arrive at an optimization plan that makes these apparently conflicting goals work together. In most cases, distributors find the following pairs of contextual data sets the most useful: markets and sales, customers and products, product lines and suppliers, contribution margins and overhead, and inventory and contribution margins. Without a dose of foresight into how certain actions affect the P&L, managers can destroy value, and planning becomes a costly trial-and-error process. A common mistake, for example, is to go after additional contribution margin dollars by lowering prices across the board in order to increase sales (making it up with volume). The problem is that volume must grow proportionally much more than the price was cut just to offset the margin reduction. That is not a realistic scenario in most cases; it’s a “fool’s game”. Another common mistake is to underprice the tail end of the product line. In other words, distributors tend to be undercompensated for investing in inventory, or underpaid for managing complexity on the customer’s behalf. Sales analytics can help you avoid these errors by recommending actions and predicting their impact on margins and ROIC. It tells you how much portfolio momentum you have, so you can take appropriate pricing actions.
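
The arithmetic behind the “fool’s game” is worth spelling out. If contribution margin is a fraction m of the price and the price is cut by a fraction d (with variable cost per unit unchanged), each unit now contributes m - d instead of m, so volume must grow by d / (m - d) just to keep contribution margin dollars flat. The sketch below, with hypothetical function names and figures, computes that break-even volume increase, along with the Productivity Index (contribution margin dollars divided by overhead dollars) as defined above.

```python
def productivity_index(contribution_margin: float, overhead: float) -> float:
    """Productivity Index (PI): contribution margin dollars / overhead dollars."""
    return contribution_margin / overhead


def volume_increase_to_offset_price_cut(cm_ratio: float, price_cut: float) -> float:
    """Fractional volume increase needed to keep contribution margin dollars flat.

    cm_ratio:  contribution margin as a fraction of the original price (e.g. 0.30)
    price_cut: price reduction as a fraction of the original price (e.g. 0.10)
    Assumes variable cost per unit and overhead stay the same.
    """
    if not 0 <= price_cut < cm_ratio:
        raise ValueError("price cut must be non-negative and smaller than the margin")
    return price_cut / (cm_ratio - price_cut)


if __name__ == "__main__":
    # Hypothetical: $3.0M contribution margin against $2.0M overhead.
    print(productivity_index(3_000_000, 2_000_000))  # 1.5
    # A 10% price cut on a product line with a 30% contribution margin.
    needed = volume_increase_to_offset_price_cut(cm_ratio=0.30, price_cut=0.10)
    print(f"volume must grow {needed:.0%} to stand still")  # volume must grow 50% to stand still
```

To check it by hand: a $100 item with $70 of variable cost contributes $30; at $90 it contributes $20, so 1,000 units at $30 ($30,000) require 1,500 units at $20 to break even. This is the kind of impact sales analytics can predict before a price action is taken.
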
Sales analytics also performs inventory investment analysis (turns and earns) and recommends a pricing strategy that differs across groups of products, depending on how much contribution margin they generate and how fast they turn. Based on predictive models, managers can develop viable optimization plans to improve the business and avoid deadly mistakes. Examples of such actions could include: a) increasing prices and contribution margins selectively, using 80/20 analytics to define different pricing levels for different products; b) scaling down overhead in selected areas of the business, allowing SG&A expenses to grow more slowly than sales (for example, holding SG&A growth below 2%); c) increasing sales in market segments that are projected to grow faster than the industry; and d) recommending the optimal parts mix in inventory to maximize turns and earns, based on demand patterns and trends. In the end, the plan is still about setting priorities, improving contribution margins, reducing overhead, optimizing investment in inventory and looking for the best market segments to grow in. What’s new here is how the actions can be combined, with the right intensity, to produce maximum results. Could we thrive without a better planning process and business analytics? We could probably survive, but given the need for agility and the pace of change, the ability to react faster and apply analytics is a key differentiator in most industries.

Six Trends Impacting Commercial Vehicle Suppliers

If you ask anyone at the “water cooler” of the automotive industry about the future, they will probably recite the top two trends right away: autonomous vehicles and advanced manufacturing (a.k.a. Industry 4.0). The experts may add three more changes expected to take effect in the next 15 to 20 years: vehicle electrification, connectivity and advanced materials.

These five trends are driven by global tendencies, mainly macroeconomic ones such as slowing growth, environmental distress (creating stricter rules), demographic shifts and growing urbanization. While it’s reassuring that everyone shares a similar vision, it’s not exactly clear how these changes are going to impact vehicle OEMs and their auto parts suppliers, and, more specifically, how they will change commercial vehicle (CV) manufacturers and their suppliers. The reason we should think about the CV industry first is the high probability that the next autonomous car might be a truck. Trucks, especially long-haul trucks in the US and Europe, have very compelling reasons to receive autonomous driving capabilities before passenger cars do. The economic benefits of ALHTs (autonomous long-haul trucks) are indisputable: fuel economy, safety, emissions, delivery efficiency, relief of the driver shortage and so on. Beyond the economics, closed-loop transportation systems will be first to market, since it is easier to maintain control over them than over passenger cars. Trucks already have a semi-open architecture for power and drive train systems, using a network of electronic control units (ECUs) over standard data links. Many of the ALHT’s enabling technologies are in place today, and new ones are being tested as we speak. The sensor arrays and the number of ECUs continue to grow. Suppliers such as Knorr-Bremse and Wabco offer full stability control systems and lane departure and radar-based anti-collision systems, to mention a few. Trucks were the first adopters of telematics back in the ’90s, becoming a showcase for Qualcomm and for how connectivity can improve overall transportation efficiency. In fact, major suppliers piggyback on telematics to communicate with their products on the go. Many in the industry believe that we are only one or two design cycles away from convoys of ALHTs traveling some highways in the US and Europe within the next 10 to 15 years.

Unlike cars, autonomous trucks will not require radical changes to their power and drive train technologies to get there. The changes are likely to be less than revolutionary. The modern diesel engine and transmission, for example, are fully capable and, with some enhancements, very likely to power ALHTs. Diesel fuel will continue to be cheap for the foreseeable future, even though we might see some electrification as hybrid and energy storage technologies develop. So change is coming to the trucking industry, and so far all we hear about is product and manufacturing hardware technologies. Although these technologies are important and disruptive, the central question for suppliers is how these changes will impact their business in the next 10 to 15 years, or how an existing supplier can capitalize on these trends. Based on my observations, I believe there are at least six specific areas that CV suppliers must look at in order to succeed in a changing CV industry: (1) move up in the supply chain (tier 0.5 supplier), (2) increase lean and agility, (3) glocalization, (4) a new service business model, (5) analytics capability and (6) the millennial generation.

Become a Tier 0.5 Supplier

Commercial vehicle OEMs are under pressure to meet both the growing need for automation features and the need for cost reductions, for competitive and regulatory purposes. The cost of non-compliance is growing every day (remember the recent debacles around International Trucks and VW diesel cars). OEMs are adding new models and features while reducing the number of vehicle architectures and suppliers. This complexity will inevitably push the ALHT architecture to become more modular, with more subsystems (modules) designed with interfaces that form similar structures, from which a number of derivative vehicles can be developed while preserving the identity of each OEM.

While OEMs will continue to be the system integrators, Tier 0.5 suppliers will be a step ahead of the Tier Ones in the supply chain, working in close cooperation with OEMs and component suppliers and offering modules with common interfaces and technologies aligned with the ALHT architecture. The design characteristics of the best modules will include simpler interfaces to the truck, energy conservation capabilities, remanufacturability, data-gathering intelligence, network connectivity, above-average reliability and competitive cost. It will take smart, integrative design by the supplier, above and beyond adding more ECUs to the product. A module will be more than today’s conventional subsystem assembly, where labor is the main value added to isolated components. Take, for example, the rear drive axle assembly, composed of an axle, brakes, wheel ends with tires and ABS/EBS controls. Today, most OEMs source these components separately from different suppliers, with little integration among them. This is the result of an evolutionary design and sourcing mindset created by a multitude of unique interfaces and functionalities, in the absence of modular system architectures for trucks. When you boil down the functionality of this subsystem, the rear end is primarily trying to manage torque between the vehicle and the asphalt as efficiently as possible. As the ALHT architecture evolves, it is not difficult to envision all of these mechanical and electronic components being optimized together to provide higher levels of performance. Smart tires and axle differentials, for example, could share the intelligence embedded in the ABS/EBS controls. Mechanical integration of the axle, brakes and wheel ends using new materials could save a lot of weight and cost. Tire pressures could be controlled to optimize tire wear in conjunction with traction and torque control.

The rear end module should be capable of adjusting its torque characteristics on the fly, based on road conditions and duty cycles. It should also be able to ask for help when it senses a problem and requires service, based on usage patterns and prognostics. The possibilities are endless, but I know many people will bring up the commercial barriers that keep suppliers from climbing up to tier 0.5. That is true, until the new architecture emerges and the interfaces are better defined for each functional vehicle element. The best example we can relate to is the personal computer. Once its architecture became stable and the interfaces well defined, a whole new industry emerged. Some component suppliers were able to climb the ladder and evolve into complete subsystem providers, while others were acquired or disappeared altogether in the process. Who is going to be best positioned to supply high-value modules under the new architecture remains to be seen. There is also the question of how vertically integrated truck OEMs will deal with this change. Are they going to collaborate more with suppliers, or will they try to become modular suppliers themselves to other OEMs? Could a company like Google or Tesla become an intelligent-module supplier in the future? Some suppliers are better prepared than others. Eaton, for example, got off to a good start developing and supplying hybrid power packages to bus and truck manufacturers. Cummins did the same in the natural gas space, with engines and fuel systems, and eventually became a supplier of fuel and emissions systems to other engine makers. The important thing is to recognize this disruptive change on the horizon and start developing your company’s strategic choices and desired position in the new supply chain.

Increase Lean and Agility

Lean manufacturing has been around for 50 years now. Still, not every manufacturer has transformed itself with lean, and many have not managed to apply it beyond the shop floor.
Suppliers will need to shift up one or two more gears to cope with shrinking margins if they want to grow profitably. Becoming leaner beyond the shop floor and increasing agility are imperatives to survive and thrive. To gain agility, suppliers must reduce internal complexity and keep it under control. That means simplifying supply chains, de-proliferating product portfolios, optimizing sales and distribution practices and streamlining business processes. For most suppliers, getting leaner beyond the shop floor requires a compelling economic benefit. Most see the power of lean to eliminate waste in manufacturing, but when it comes to other areas, the enthusiasm is not the same. This is why I believe suppliers need 80/20, or “lean on steroids”. As I’ve written before, lean and 80/20 are “sisters” and need to work in tandem if you want to cut waste and become more agile at the same time. Lean is primarily an “inside-out” methodology, while 80/20 is an “outside-in” process. 80/20 starts with the customer and the market. Lean starts with a strategic intent or purpose from within the company and eventually becomes a transformation tool, which changes the way people think and the way processes work. Combining lean and 80/20 will increase focus and profitability and reduce complexity. It will sharpen your company’s focus on the market’s sweet spot, simplify your offering and drive complexity out of the critical business processes. It will also create a more decentralized and autonomous organization, capable of faster organic growth. Lean and 80/20 will also lead you to a sensible automation approach for your manufacturing processes, aimed at maximum productivity and lowest cost, rather than automation and connectivity for the sake of keeping up with the latest buzzword (Industry 4.0?). Let market demand drive the need for 3D printers and robots in your high-volume production lines, and not the other way around.
Ease of connectivity on the shop floor is happening anyway! But that is the hardware, while lean and 80/20 represent the software.

Adopt Glocalization

There are no surprises about the global nature of the CV industry and the need for suppliers to support their OEM customers around the world. But there are different approaches to helping your customers globally. Glocalization can be thought of as another buzzword, but it has a role in our industry. Sometimes you must offer different versions of the same product for different markets, but you don’t necessarily need to become more complex and proliferate your product lines to do that. You also have to create product availability close to the customer site, but you don’t necessarily have to own all the means of production yourself. To use another piece of jargon, glocalization means that you think globally (product architecture, supply chain, partnerships) and act locally (product features, cost and service needs). A good example of a company that does this very well is Cummins. While the same mid-range engine platforms (ISB and ISC) are used all over the world, the final products get customized in different markets to meet local competitive requirements and service needs. Nor is production carried out directly by Cummins in every region. In China, for example, Cummins has a joint venture with OEM customers such as Foton Trucks. Cummins has “glocalized” production in China to produce the ISB four-cylinder engines, with different fuel systems and accessories that are better suited to local emissions, cost and service practices. In other markets, Cummins uses a combination of its own plants, JVs and licensees to create availability of “glocalized” products. Glocalization normally happens on two fronts: manufacturing footprint and products. But there is more to it, such as localizing the design and maintenance of global product platforms for major developing markets.
The growing number of technical centers in India and China is a good example. Engineers there work collaboratively with their peers in the US and Europe to create new designs and tweak existing platforms. The product design for a high-volume emerging-market truck component should ideally be done in the region. OEMs are quickly learning this lesson and turning more often to suppliers in the region that are capable of true localization based on global platforms. Think of BharatBenz in India and Foton Trucks in China as companies that are adopting a glocalized sourcing strategy.

Change the Service Business Model

The concepts of vehicle uptime and TCO (total cost of ownership, or life-cycle cost) will be top priorities for fleet customers, since they can now operate their transportation systems 24 hours a day, seven days a week. At the same time, the fact that ALHTs are always connected to the network creates a major business opportunity for suppliers willing to innovate in their service business model. Customers will continue to demand a seamless purchase experience for parts and service. They will also want to monitor the health of their vehicles online, using predictors to decide when it’s time to pull a truck in for service, and select strategically located service shops to minimize downtime. Loyalty to the channel (OE or independent) and to the brand on the box will diminish, as customers pay a lot more attention to TCO and uptime. In fact, end users will care less and less whether parts are new or remanufactured, as long as they get the job done in terms of cost and availability. There is a major opportunity in remanufacturing components, in the broader sense. It is not only more economical but also makes a lot of sense from an environmental standpoint. And the biggest revolution in remanufacturing has to do with the supplier’s expertise in electronic salvage technology and reverse logistics.
Remanufacturing of electronic components will go way up. The ability to reprogram the hardware with new or upgraded code, and to deliver the new software via the network, will be a huge differentiator. In some cases, we are now talking about real-time remanufacturing of products. But suppliers will have to dedicate engineering resources to developing new salvage techniques for hardware and software, so they can change fewer parts and earn better margins.

Develop In-house Analytics Capability

There are two key reasons to be good at analytics: first, customers will demand that your products and services offer predictive capabilities; and second, you will want to use the abundance of information to develop market foresight and optimize your product portfolio. Your products will become nodes in the ALHT’s data network. Capturing miles and hours of operation is OK, but not sufficient to create predictors that you can act upon. Usage and duty-cycle data, operating environment information, product health and performance, maintenance interventions and a host of additional data will become available. Being able to make sense of the data, to predict the future and to make it happen for you and for the customer, will be a huge differentiator in the market. Predictive analytics capability will allow you to get closer to customers and derive usage practices that you can apply for multiple purposes. Predictors will help with early problem detection and service prognostics, which are critical for the operation of ALHTs. When it comes to autonomous driving, no one will want a disabling failure to happen without prior warning. The risk is too high. Suppliers will have to learn these new skills, since most of what they have today is warranty information analysis and service diagnostics, which can only tell “what happened” and “why it happened”. Current analysis is not geared to point to “what will happen” and “how you can make it happen”.
Once these new analytical skills are developed, you will be able to use them to improve the performance of your own sales and product portfolio. Suppliers will use analytics to continuously optimize their offerings, as the product mix will vary even more across OEMs and regions. Customer pain points and usage patterns will feed right into the supplier’s product plans and service strategies. There will be less guessing and subjectivity. And without active, objective management of the product portfolio to sustain momentum, suppliers will likely suffer from slow growth and sub-optimal contribution margins.

Empower the Millennial Generation

Last but not least, CV suppliers need to have their experienced leaders focused on developing and empowering the next generation, before it’s too late. An industry that has traditionally thrived on experience and people’s networking abilities must learn to succeed with tech-savvy, connected people who don’t necessarily want to stay with the same company for their entire careers. Suppliers will have to adjust to the fact that Gen Y workers are more inclined to be “employed consultants” who want more flexibility and recognition. The good news is that the new generation is a lot more global and in tune with the trends affecting the commercial vehicle industry. They are likely to see the advent of autonomous vehicles as a natural and needed evolution in the entire transportation matrix. They will want to be challenged and recognized, working in focused business units with clear KPIs, as opposed to overly functional global matrix organizations. They will be more like the ALHTs themselves, where the network creates the strength and not any specific node in isolation. Surely there are other things that CV suppliers will have to worry about in the next 20 years. And you don’t have to be good at all of these areas to be successful either. But some of them can have a perverse effect if you don’t pay attention to them.
A case in point is the arrival of the millennial generation in the workforce. Failure to bridge the gap between baby boomers and millennials can send disruptive shock waves throughout the culture and distract the organization. Other areas, such as glocalization, can create cost and limit growth in a global CV industry if poorly executed. A lack of innovation in your service business model can cost you a lot of money in customer support and reputation.

Other areas, like lean, 80/20 and analytics, are performance differentiators. The suppliers who excel in these attributes will pull ahead of the pack and make more profits. And Tier 0.5 is the ultimate aspiration and a delighter to customers. If you are able to deliver innovation and value that is aligned with the new truck design architecture, you will have an edge. But these basic areas cover only a few of the attributes needed to become a Tier 0.5 supplier. Other competencies are required, and not all of them are present in our industry today.

Almost every business starts simple and becomes complex as it grows and develops. As scale changes, simplicity gives way to layers of structure and controls. When complexity creeps in without control and is not properly measured and managed, it can work against value creation and profitability. In reality, the problem is not extra scale but extra complexity. Additional scale, without additional complexity, will always give lower costs. As a business grows, it wants to provide more services and products, which end up stimulating other overhead drivers, such as sales and purchase transactions, manufacturing footprint, accounting systems, organizational structure and managerial habits, to mention a few. But of all the complexity sources, a swelling product portfolio and a high number of discrete components can be the largest hindrances to attaining high levels of profitability, growth and customer satisfaction. When the product offering contains just a small amount of variation, the impact of adding new parts is relatively minor. However, as complexity grows in the form of low-volume products and customers, just a few additional part numbers can create a disproportionate increase in complexity costs. Without deliberate and systematic actions to simplify the product offering, non-value-added complexity will prevail over time. On the other hand, part number de-proliferation can have a huge positive impact on profitability.
Thomas Johnson and Anders Broms wrote about tackling product proliferation, and how doing so can improve profitability, in a 1995 article in the AME Magazine[i] describing Scania’s approach to dealing with complexity: “Everyone believes that a manufacturer will improve costs and profitability by reducing the number of different parts in its products. And for good reason. With fewer different parts, less effort and resources are required to design, make, and service a product line. Accordingly, activity-based cost management systems routinely use part-number count as a cost driver to estimate how much financial performance will improve by reducing the number of different parts. However, it is not well understood that cost-driver information may capture only a small fraction of the financial improvement that part-number austerity makes possible.” The good news is that product line complexity is easy to detect through symptoms such as too many products and too many customers, or too many part numbers, too many suppliers and too many transactions. The number of discrete parts or products and the number of transactions are two important cost drivers for estimating the financial opportunity of tackling complexity, since they are directly responsible for less apparent issues, such as too many systems, too many reports, too many procedures and, last but not least, too many people creating and maintaining part numbers and SKUs. Not to mention the complexity created by tailored parts that interfere with standard products every day. But how do you tackle the proliferation of part numbers and complexity in the product portfolio? There are three steps: diagnosis, simplification and prevention. First, you need to quantify and qualify complexity. Second, you need to apply what I call “causative simplification” to reduce complexity.
Third, you need to prevent new complexity from slipping into the system by creating a filter that keeps new parts and products from entering without scrutiny, and you also need to measure product line complexity on an ongoing basis. Prevention can eventually lead you to create a vision for a product line architecture that is more modular and simpler, while closely aligned with your business model. This approach to simplicity is very effective and long lasting, as seen in companies such as the European truck maker Scania. However, it is not trivial. If your company was not created with such a product architecture in mind, you will have to muscle through the simplification work before attempting to build such a system.

Diagnosing Complexity

Complexity can be quantified using metrics that are associated with the symptoms of having too many part numbers and SKUs. Shipping performance (shipping late and incomplete, for example) can be an indicator of elevated complexity. The sales performance of items that you normally carry in inventory, in terms of “turns and earns”, can be another good choice. Other metrics relate to the health (and sanity) of your engineering database, such as how many product designs are made for similar applications but sold to different customers; the number of subtle design variations in similar parts; and, one of my favorites, the number of engineering change requests (ECRs) that enter the system every day or every month with little or no discussion. You would be surprised by the number of ECRs that many manufacturing companies process every day without really understanding the benefits and the impact on the cost of complexity. In many cases, it is useful to estimate the cost of complexity associated with each part number. People need to see and relate to the problem in order to get involved in the solution.
One of the best ways to estimate the cost of complexity is to calculate the impact of product proliferation on the overall cost of goods sold (COGS). This is best done by product line, if the P&L is structured that way, but an overall number also helps visualize the issue. Most companies use a “clean-sheet-based cost model”, or design cost, to determine the “should cost” of each part or SKU. Every month they take the total variable cost dollars, add the period costs and compare the sum with the total “should cost” (design cost multiplied by volume in the same period). Even though the “should cost” is close to a theoretical cost, the difference between the actual and the should cost gives you a good benchmark to measure yourself against. The chart below shows a typical cumulative distribution of sales dollars versus part numbers or SKUs, compared with a cost of complexity curve calculated using the method above. As part count increases, the complexity cost goes up exponentially. Limiting your portfolio to the high-volume SKUs or the sweet spot of the market is ideal from a complexity standpoint, but not practical. The question is always how much complexity you can afford to let into the business to meet the needs of your core customers while avoiding the “red” zone of complexity cost. In other words, complexity needs to be actively managed. To qualify complexity, we use 80/20 analytics, more specifically the “customer versus product matrix” (CP matrix) and quadrant analysis (quad analysis). These analytics are extremely important for visualizing the problem and the data patterns and for providing a path to the next step, causative simplification. The figure below illustrates the different areas in the CP matrix and the different approaches to simplification. Just by looking at the data and performing the quad analysis, you can reach several conclusions that will lead to improvement.
Do less than 20% of the products account for 80% of the sales? Is there a large number of SKUs with minimal sales and slow-moving or obsolete inventory? How porous or compact is your portfolio? How large is your low-volume offering compared to the high-volume offering, or sweet spot? The density or sparsity of the portfolio, for example, can be very revealing in terms of sales frequency and the effectiveness of the different product lines. The shape of the data (clustered or patchy, with or without gradients or receding patterns) can reveal distortions in buying patterns and point to subsegments or regions that have different needs.
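The cost-of-complexity comparison and the quad analysis described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a prescribed 80/20 tool. The function names and inputs are hypothetical. “Top” customers and products are assumed to be the highest sellers that together first reach 80% of sales. The quadrant labels (Q1 for top customers buying top products, through Q4 for other customers buying other products) follow one common convention that is assumed here.

```python
from collections import defaultdict


def complexity_gap(design_costs, volumes, variable_cost, period_costs):
    """Actual cost (variable + period) minus total "should cost" for one period.

    design_costs and volumes are hypothetical dicts keyed by SKU; the total
    "should cost" is the sum of design cost times volume in the period.
    """
    should_cost = sum(design_costs[sku] * volumes[sku] for sku in volumes)
    actual_cost = variable_cost + period_costs
    return actual_cost - should_cost


def eighty_set(totals, threshold=0.8):
    """Highest-selling keys that together first reach `threshold` of total sales."""
    ranked = sorted(totals.items(), key=lambda kv: kv[1], reverse=True)
    target = threshold * sum(totals.values())
    top, running = set(), 0.0
    for key, value in ranked:
        if running >= target:
            break
        top.add(key)
        running += value
    return top


def quad_analysis(transactions, threshold=0.8):
    """Split sales dollars into four quadrants from (customer, product, sales) rows.

    Assumed convention: Q1 top customers x top products, Q2 top customers x
    other products, Q3 other customers x top products, Q4 the rest.
    """
    by_customer, by_product = defaultdict(float), defaultdict(float)
    for customer, product, sales in transactions:
        by_customer[customer] += sales
        by_product[product] += sales
    top_c = eighty_set(by_customer, threshold)
    top_p = eighty_set(by_product, threshold)
    quads = {"Q1": 0.0, "Q2": 0.0, "Q3": 0.0, "Q4": 0.0}
    for customer, product, sales in transactions:
        if customer in top_c:
            quads["Q1" if product in top_p else "Q2"] += sales
        else:
            quads["Q3" if product in top_p else "Q4"] += sales
    return top_c, top_p, quads


if __name__ == "__main__":
    # Hypothetical data for illustration only.
    gap = complexity_gap({"A": 10.0, "B": 25.0, "C": 40.0},
                         {"A": 1000, "B": 200, "C": 50},
                         variable_cost=15_000, period_costs=4_000)
    print(gap)  # 2000.0 (19,000 actual vs 17,000 should cost)

    rows = [("c1", "p1", 500), ("c1", "p2", 200), ("c2", "p1", 150),
            ("c3", "p3", 50), ("c4", "p4", 60), ("c2", "p5", 40)]
    top_c, top_p, quads = quad_analysis(rows)
    share_of_products = len(top_p) / len({p for _, p, _ in rows})
    print(sorted(top_p), share_of_products)  # ['p1', 'p2'] 0.4
    print(quads)  # {'Q1': 850.0, 'Q2': 40.0, 'Q3': 0.0, 'Q4': 110.0}
```

In this toy data, 40% of the products are needed to reach 80% of sales, so the first question above would be answered “no”. On a real portfolio, the size of Q4 relative to Q1 is one way to put a number on how porous the offering is.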

Causative Simplification

The next step is to start unloading product complexity from your company, using the outcome of your 80/20 analytics to optimize the product portfolio, combined with a disciplined approach to simplifying the product line. I call this phase “causative simplification” because it is designed to produce simplification. With the help of 80/20 analytics, you focus first on the business reasons to outsource, consolidate, price up or simply eliminate part numbers and SKUs that are not aligned with your strategy. Low-volume products sold primarily to low-volume customers should be priced up accordingly, to reflect the cost of complexity. You should also look at how your inventory investment is performing for these low-volume items, using the “turns and earns” index (inventory turns multiplied by contribution margin percentage), and adjust your commercial policies and inventory practices accordingly. In summary, you first let the market respond to your simplification measures (outside in) and then apply a conscientious effort to reduce proliferation from the inside out using PLS (product line simplification). Successful product line simplification is customer-centric and capable of identifying products or features that will satisfy application needs and address market pain points, as opposed to just offering a variety of designs to choose from. PLS cannot be driven by manufacturing, engineering or purchasing in isolation from other areas. It needs to be a collaborative, multidisciplinary effort by different areas of the business with an eye on the customer application. PLS takes time and effort and is not an overnight exercise.
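The “turns and earns” index mentioned above is simple arithmetic, sketched here in Python. Two assumptions are flagged explicitly. First, inventory turns are taken as annual cost of goods sold divided by average inventory, which is a common definition but is not stated in the article. Second, the 100-point cutoff used to flag weak items is a hypothetical threshold, not a recommendation from the text.

```python
def inventory_turns(annual_cogs, avg_inventory):
    """Inventory turns; assumed definition: annual COGS / average inventory value."""
    return annual_cogs / avg_inventory


def turns_and_earns(annual_cogs, avg_inventory, sales, contribution_margin):
    """Turns-and-earns index: inventory turns x contribution margin percentage."""
    cm_pct = 100.0 * contribution_margin / sales
    return inventory_turns(annual_cogs, avg_inventory) * cm_pct


def flag_low_performers(items, threshold=100.0):
    """Names of items whose index falls below a hypothetical cutoff."""
    return [name for name, figures in items.items()
            if turns_and_earns(*figures) < threshold]


if __name__ == "__main__":
    # Hypothetical (annual COGS, average inventory, sales, contribution margin).
    items = {
        "high_volume_sku": (60_000, 15_000, 100_000, 30_000),  # 4 turns x 30%
        "low_volume_sku": (6_000, 6_000, 10_000, 2_500),       # 1 turn x 25%
    }
    for name, figures in items.items():
        print(name, turns_and_earns(*figures))  # 120.0, then 25.0
    print(flag_low_performers(items))  # ['low_volume_sku']
```

Items that score low, like the hypothetical low-volume SKU here, are the natural candidates for the price-up, stocking and commercial-policy adjustments described above.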
There are three major goals in PLS: 1) to reduce part count, or the number of discrete products within a product portfolio, consistent with your business strategy; 2) to define a clear position on tailored products: who should get them and how they are to be priced and produced; and 3) to align manufacturing processes to support the streamlined product offering. PLS and portfolio optimization need to work hand in hand. PLS needs to support both part count reduction and overall margin improvement at the same time. It should not be used to eliminate a complete business line or just the low-volume part numbers, and by no means is it intended to leave customers without viable options to satisfy their application needs. The initial focus of PLS should be solely on whether a product or item will be included in the product offering or not. Leave the make-or-buy decisions for later. At the beginning of the PLS process, it is a lot more important to decide what to drop and what to include. The decision to include a product is primarily made by the commercial people, in support of marketing strategies, market pain points and end user needs. Focus on high-volume and high-potential products first, and compare your portfolio with the high-volume products of the market. Look at how much customization you are providing, and ask yourself whether it is done at the behest of the core or “eighty” customers. Then shift your attention to simplifying the low-volume products. Consider physically separating production of low-volume parts, outsourcing, redesigning certain products and even dropping marginal products from the portfolio altogether. Creative strategies surface when the task force performing the PLS is truly multidisciplinary and has engaged participants from different areas.
When a few core customers require a certain level of customization, for example, manufacturing team members may be able to devise a way to tailor a standard high-volume product at the end of the line (postponed customization). Engineering members may be able to redesign one product to perform the function of two or more products. Finally, sales and marketing members need to consider what else should be pruned or eliminated at the bottom of the CP matrix, where sales density is very low.

Preventing New Complexity

The third action in reducing complexity is to control the introduction of new items and to create complexity metrics. The control step is central to avoid creating new part numbers that are not in line with the unique value propositions (UVPs) of the business. Any new part or SKU entering the development process needs to go through a two-stage screening process, or filter. The first filter is a deal breaker that evaluates the product’s strategic fit. It asks whether the new product or part number aligns with both the needs of the core customers and the business’s UVPs. It also tries to understand the contribution margin potential early on, asking why a new product should be added to the portfolio and what evidence proves that it will make money. The second screening stage is conditional (“yes, but…”), establishing how the company will position the product to meet its profitability targets. It asks several questions: What is the minimum acceptable contribution margin? How are we going to price the new product? If the company is creating a “twenty” product, is it doing so for an “eighty” customer? How are we going to create availability? Can we produce it on an existing manufacturing line? Should we create availability by outsourcing this product? No product change request or new product charter should be approved without going through this screening process.
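The two-stage screening filter described above can be expressed as a small decision function. This is a hypothetical Python sketch, not a tool the article prescribes. The `ItemRequest` fields simply encode the questions listed in each stage, and the 30% minimum contribution margin is an illustrative placeholder that each business would set for itself.

```python
from dataclasses import dataclass, replace


@dataclass
class ItemRequest:
    """Hypothetical summary of a request to add a new part number or SKU."""
    serves_core_customers: bool  # aligned with the needs of the "eighty" customers?
    fits_uvp: bool               # aligned with the business's UVPs?
    has_margin_evidence: bool    # any evidence the item will make money?
    expected_cm_pct: float       # estimated contribution margin, % of sales
    price_defined: bool          # has pricing been worked out?
    is_twenty_product: bool      # low-volume ("twenty") item?
    for_eighty_customer: bool    # requested by a core ("eighty") customer?
    fits_existing_line: bool     # can an existing manufacturing line make it?
    can_outsource: bool          # can availability be created by outsourcing?


def screen_request(req, min_cm_pct=30.0):
    """Two-stage filter returning ("reject" | "conditional" | "approve", reasons)."""
    # Stage 1: deal breaker on strategic fit and early evidence of profitability.
    stage_one = [
        (req.serves_core_customers, "does not serve core customer needs"),
        (req.fits_uvp, "not aligned with the UVPs"),
        (req.has_margin_evidence, "no evidence it will make money"),
    ]
    failures = [reason for passed, reason in stage_one if not passed]
    if failures:
        return "reject", failures
    # Stage 2: conditional ("yes, but...") checks on how to reach profitability.
    conditions = []
    if req.expected_cm_pct < min_cm_pct:
        conditions.append(f"reach the minimum contribution margin of {min_cm_pct}%")
    if not req.price_defined:
        conditions.append("define how the product will be priced")
    if req.is_twenty_product and not req.for_eighty_customer:
        conditions.append("justify a 'twenty' product not requested by an 'eighty' customer")
    if not (req.fits_existing_line or req.can_outsource):
        conditions.append("define how availability will be created")
    return ("conditional", conditions) if conditions else ("approve", [])


if __name__ == "__main__":
    base = ItemRequest(serves_core_customers=True, fits_uvp=True,
                       has_margin_evidence=True, expected_cm_pct=38.0,
                       price_defined=True, is_twenty_product=False,
                       for_eighty_customer=True, fits_existing_line=True,
                       can_outsource=False)
    print(screen_request(base))                           # ('approve', [])
    print(screen_request(replace(base, fits_uvp=False)))  # ('reject', ['not aligned with the UVPs'])
    print(screen_request(replace(base, expected_cm_pct=20.0))[0])  # conditional
```

Logging each decision this way also produces the approved-versus-rejected counts that the complexity metrics in the next paragraph rely on.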
Companies with effective screening processes have clear product complexity metrics that indicate how many new requests have been approved or rejected and provide a clear view of the development pipeline. These metrics also track the number of products, part numbers and SKUs eliminated from the system each month. I’ve talked about complexity metrics above and in previous articles, and there are several options you can use, depending on your specific situation: sales dollars per SKU, contribution margin dollars per SKU, total number of items entering and leaving the system every month, etc. If the business has a strong distribution component, you may want to use the turns and earns index or CMROI (contribution margin return on investment) to monitor the profitability of your inventory. The point is to pick only a few metrics that people can relate to and stay with them over time. In summary, in order to eliminate complexity, you have to create simplicity. Simplicity is the ultimate “lean transformation”. 80/20 analytics and PLS are excellent tools to help you create simplicity and increase your profitability. And once you’ve gone through this exercise, you will be better prepared to envision new and better product line architectures that have simplicity in their DNA, as Scania did many years ago.

[i] H. Thomas Johnson and Anders Broms, “The Spirit in the Walls: A Pattern for High Performance at Scania,” Association for Manufacturing Excellence (AME) Magazine, May/June 1995.

Top-line growth is essential for the long-term viability of any business. There is evidence showing that companies that do not grow over a certain number of economic cycles are destined to go out of business or be absorbed by others. Growing below the industry or GDP growth rates can be a significant drawback for companies and leaders. But you can’t just add revenues at any cost.
If growth is not executed in a lean and profitable manner, the additional revenues can bring along extra complexity and more overhead. Low-quality growth can increase financial leverage and reduce cash flow, compromising performance and long-term viability. I was recently invited to participate in a discussion panel at the SelectUSA 2016 Summit in Washington, DC, to share my experience with our company. More than 2,500 participants from 70 countries listened to speeches and accounts from international companies doing business in the US on how to enter and grow in the largest consumer market in the world. The questions I kept hearing were certainly related to entering the US market, but mainly about sustaining profitable growth afterwards. In other words, how do you balance the need for growth with the risk of entering an extremely competitive market? A lot of the complexity that goes unmeasured and unmanaged in organizations is created when companies pursue revenue growth at any cost. In many cases, this happens when they try to grow their product and customer portfolios in mature, developed markets without a plan to keep complexity at bay. Trying to gain market share in established markets, or growing on too many fronts, can create a lot of transactional complexity and increase overhead faster than revenues and profits. In fact, there is a misconception that, when entering a new market, the growth options are limited to taking market share from existing competitors or merging with or acquiring an existing business (M&A). I know from experience that there is a third option that should be explored, one that is the most effective and often neglected. 80/20’s approach to quality growth calls for a sharp focus on a select few market segments, coupled with portfolio optimization, in order to specialize your offering and maximize growth.
When you specialize your value proposition in select niches, using segment-focused (often decentralized) business units, market share gains come naturally and not at a higher cost. M&A initiatives can then be used to gain portfolio momentum, focusing first on new product lines and bolt-on acquisitions and second on growth via new business platforms. In their book The Granularity of Growth[i], the authors from McKinsey & Co. split a company’s growth into three main components they call “growth cylinders”:

In their study of 416 US companies over two economic cycles, they show that portfolio momentum, or market growth, explains 46% of the difference in growth performance between large companies. Only 21% came from share gain. Furthermore, regardless of which growth cylinders you choose, the top three success factors are the same ones touted by real estate agents everywhere: “location, location and location”. Granularity is king, and I was not surprised to hear similar reports from almost every successful investor at the SelectUSA Summit. What they mean is that, in order to succeed, you need to be very selective and dive deeper into the industry segments and niches (and regions and cities) that you wish to target. You need depth and granularity in your growth strategy when deciding where to focus your efforts to compete and grow.

Picking the most attractive segments in which to compete is just as important as (or even more important than) knowing how to compete. There are better market sweet spots, or niches, where you can sustain a higher level of organic growth for your specific product or service offering. And to find out where these niches are, just as in the real estate business, you need to do more than understand the market fundamentals, such as the competitive structure, cyclicality and regulations. You need to use primary research coupled with analytics to find uncommon knowledge, such as the average contribution margins practiced in the segment and how operating cash is used in the form of working capital (receivables, payables and inventory). Once you’ve selected the market and defined the initial composition of your portfolio, there are only three ways to improve momentum. The first is to reallocate resources and effort from the “twenty” areas (sour spots) to the “eighty” area of the portfolio (sweet spot). The second is to change the structure of your portfolio, for example by changing commercial policies (pricing, rechanneling) and reducing complexity (consolidating SKUs). The third is to actually grow your markets, by expanding your portfolio via bolt-on or product line acquisitions and by expanding into new customer pockets or niches (a new region or a new level of granularity in your segmentation). You can find out how much portfolio momentum you have by looking at top-line growth and quality of sales in each segment, determining how your business stacks up against the market and the competition. 80/20 analytics can give you a very good idea of the “fitness level” of your portfolio, focusing on sales efficiency, productivity, revenues and margins. Here’s a list of indicators to look at:

Growing the top line in a new region is obviously less risky and more profitable if you have a track record of successfully entering new markets. Some companies, like many in the pharmaceutical industry, have developed this capability really well. But even then, you can’t just assume that you will grow by taking market share from competitors in a market like the US simply because you are the leader someplace else. If taking share is your only strategy, you might struggle with margins and sustainable growth. You need more than a single growth option and a higher level of specificity about where you play (location, segment, niche) and how you play. You need to be firing on more cylinders than just market share. The questions above should help you rethink your target segments and adjust your growth plans. Doing your “location” homework and fine-tuning your portfolio to gain momentum are the best strategies to enter a market like the US. An acquisition helps if it’s done in support of your portfolio customization strategy, but you need to be careful not to add too much complexity from day one and not to inherit a skewed portfolio. The best lessons from companies that succeeded in entering and growing in mature markets have more to do with outstanding niche selection and portfolio acclimatization and less to do with fighting for market share and doing mergers and acquisitions upfront.

[i] Patrick Viguerie, Sven Smit and Mehrdad Baghai, The Granularity of Growth: How to Identify the Sources of Growth and Drive Enduring Company Performance, John Wiley & Sons, Hoboken, New Jersey, 2008.

80/20 is a business process used to improve operating earnings and to differentiate the company from the competition. It does so by focusing on high-growth opportunities and by systematically reducing complexity and variation. The method has four basic and interrelated fronts:

There are different application methods and tools for each area of 80/20. For example, to gain the necessary focus on the select group of customers and products that make up the “sweet spot”, 80/20 uses a number of analytical tools, such as the customer-product matrix (CP matrix) and quad analysis. These tools belong in the 80/20 toolbox, or toolboxes, as different companies have unique tools and call their custom-built processes by different names. Nor is there a rigid prescription for how to apply them. Some companies, like Danaher and GE, actually run a hybrid system composed of 80/20 elements and other Business Process Improvement (BPI) systems. However, almost all common BPIs, including Lean, Six Sigma, the Deming Cycle (PDCA) and Business Process Reengineering, are built on natural cycles of problem recognition, healing and improvement. We can easily relate to these cycles from our personal lives, whenever we face a problem of some magnitude. Before solving the problem, we ask questions, gather information and try to understand the nature and complexity of the issue. Then we instinctively prioritize, focusing on the big issues first and putting minor problems aside for a while. Next we explore multiple alternatives, and once there is a viable solution, we look for ways to apply it with the least effort possible. As we solve problems, we learn from the experience and use it either to avoid a similar problem altogether or to solve it faster next time. In either case, we have reached a new level of performance through innovation and moved on to a new baseline. Below is a depiction of the steps of the Lean and Six Sigma BPIs, stacked against the natural phases of problem recognition, healing, improvement and sustaining. 80/20 is not the same as Lean or Lean Six Sigma. Their purposes are both different and symbiotic.
80/20’s primary objective is to maximize shareholder value, while Lean’s primary objective is to maximize customer value. These are complementary and interdependent goals, as you cannot achieve one without the other. Shareholder value will not be attained if the customer doesn’t receive value, and a company cannot deliver customer value if shareholders are not investing enough in the business because it isn’t generating adequate returns. But there are real differences between 80/20 and Lean, and they reside in two areas: 1) the way each accomplishes its objective and, ultimately, 2) the scope or breadth of the method.

Lean maximizes customer value by minimizing waste and doing more with less, while 80/20 maximizes shareholder value by sharpening focus on the “sweet spot” and shifting resources from the “trivial many” to the “vital few”. And when you focus on the “sweet spot” of the business and physically segregate the good from the not so good, you know the true cost of complexity (and waste) and have an extra incentive to do something about it, since the financial metrics are segregated along with the business. The second point has to do with the fact that Lean is primarily an “inside-out” methodology, while 80/20 is an “outside-in” process. Lean starts with a strategic intent or purpose from within the company and eventually becomes a transformation tool, which changes the way people think and the way processes work. Contrary to a popular misconception, Lean can be applied to all areas of the organization, not only to manufacturing; in fact, the term transformation, or Lean transformation, is commonly used to characterize a way of thinking that goes beyond the shop floor. 80/20, on the other hand, starts with the customer and the market. It starts with the analytics and the data, showing how the market actually pays for the company’s value proposition, and evolves to create new ways of running the business. It starts with a real picture as opposed to management perception. 80/20 adjusts the portfolio to the “sweet spot” of the market, takes out complexity that the customer is not paying for, segments the customer base for accelerated growth and innovates based on market needs and pain points. Lean rarely deals with the business model, while 80/20 has no “sacred cows”. But you should not think of 80/20 and Lean as good or bad! Nor should you see them as opponents. In fact, 80/20 has a clear role for Lean when it comes to complexity reduction.
Lean is the right method to streamline the manufacturing of the “eighty” products and to optimize the flow of products and services through entire value streams and departments to customers, for example. Lean can also help the company respond to changing customer needs with high quality, low cost and very fast throughput times. Lean is a friend of 80/20! And as one of the foremost 80/20 experts used to say: “80/20 is lean on steroids”.

In previous writings I’ve talked about the importance of 80/20 data analytics. Here I want to give you an application example and hopefully explain how effective this process can be when you apply sales analytics in conjunction with 80/20 thinking and tools in search of profitable growth. Sales analytics tools compile data from different sources, mainly related to customers and products, and transform the records into useful information and insight that sales leaders use to understand their business and develop improvement actions. Data can come from databanks and pipelines such as CRM (customer relationship management) systems, commercial transaction files, product cost databases and so on. This multiple interfacing capability is called MDS, for Multi-Data Source integration. Tools with MDS capability mine the data from different archives, cluster it together and enable visualization through dashboards and reports. The level of refinement is growing rapidly, and most tools now offer predictive analysis, which looks for leading indicators. At first glance, these indicators may not look directly connected to sales events; however, through further correlational analysis and multiple associations in the data, a connection can be established and the indicator becomes a viable predictor of sales. As an example, the number of visitors to certain websites might be a good predictor of future sales of products related to those websites.
Marketing organizations are constantly mining data to look for hidden relationships and trends that can provide clues about customers’ wants and sales trends. Perhaps the most widely used sales analytics tool for e-commerce is Google Analytics, a service from Google that provides statistics and basic analytical tools for search engine optimization (SEO) and marketing purposes. Geared towards small and medium-sized retail websites, Google Analytics has many features, such as data visualization (dashboards and scorecards), motion charts, segmentation analysis and custom reports. In spite of this growing level of sophistication, the vast majority of these tools are multi-purpose, designed to organize the output in generic ways that are easy to understand and track. It’s then up to managers to create strategies and draw conclusions from the many dashboards and predictors. And since these are primarily off-the-shelf tools and every business is unique, customization not only costs a lot of money but also puts a cast around the company’s analytical capability. Having said that, almost all basic software products offer useful features such as sales dashboards, order pipeline and territory management, sales planning and productivity metrics. The more advanced products offer additional features like predictive analysis, sales forecasting, customer data segmentation and product profitability analysis. Off-the-shelf analytics tools are great for tracking the performance of the sales team, but they are not meant to be transformational tools on their own. They give managers useful information and clues about productivity issues and market penetration, for example, but they do not provide a roadmap to growth or increased profitability. It’s when you couple these tools with 80/20 analytics and mindset that you can develop a strategic plan to optimize the portfolio, increase profitability and grow.
Below I make an attempt to stack these tools from the tactical, or day-to-day, dimension (level 1) to the most strategic dimension (level 5). The first two levels are what I call “performance” levels, and the other three can be considered “transformational” levels. The higher you go, the more impactful and lasting the results will be. Level 3, or 80/20 analytics, allows us to use the data from levels 1 and 2 to act on the notion that “some customers and products are more equal than others” and to apply super-high focus to a select group of customers within the existing market segment. The customers in this select group are the ones likely to pay more for your unique value proposition (UVP) than the trivial many, or the “twenty” customers. Tools such as quad analysis help you fine-tune the portfolio by reducing overhead and SKU count, better aligning both with the needs of the “eighty” customers. Misaligned overhead and low-volume, low-margin products only create complexity (read: cost), and these are the tools that help you simplify your sales processes and optimize your offering. But once you are happy (for a while) with the optimization and simplification, then comes growth.
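Quad analysis, as it is commonly described in 80/20 practice (an assumption here, since companies name and tune it differently), ranks customers and products by sales, labels the ones that make up roughly the first 80% of sales as “A”, and then splits sales into four quads: A customers buying A products (Quad 1), A customers buying B products (Quad 2), B customers buying A products (Quad 3) and B customers buying B products (Quad 4). The minimal sketch below assumes a flat list of (customer, product, sales) transactions; the function names, the 0.8 threshold and the data are hypothetical.

```python
from collections import defaultdict


def vital_few(sales_by_key, threshold=0.8):
    """Keys that, ranked by sales, account for the first `threshold` share of total sales."""
    total = sum(sales_by_key.values())
    ranked = sorted(sales_by_key.items(), key=lambda kv: kv[1], reverse=True)
    selected, running = set(), 0.0
    for key, amount in ranked:
        if running >= threshold * total:
            break  # the "eighty" is covered; the rest are the trivial many
        selected.add(key)  # includes the key that crosses the threshold
        running += amount
    return selected


def quad_analysis(transactions, threshold=0.8):
    """Split sales into quads 1-4. `transactions` is a list of (customer, product, sales)."""
    by_customer, by_product = defaultdict(float), defaultdict(float)
    for customer, product, amount in transactions:
        by_customer[customer] += amount
        by_product[product] += amount

    a_customers = vital_few(by_customer, threshold)
    a_products = vital_few(by_product, threshold)

    quads = {1: 0.0, 2: 0.0, 3: 0.0, 4: 0.0}
    for customer, product, amount in transactions:
        if customer in a_customers:
            quad = 1 if product in a_products else 2
        else:
            quad = 3 if product in a_products else 4
        quads[quad] += amount
    return quads


# Hypothetical annual sales by customer and product.
transactions = [
    ("Acme", "P1", 500.0),
    ("Acme", "P2", 200.0),
    ("Beta", "P1", 150.0),
    ("Beta", "P3", 50.0),
    ("Gamma", "P3", 60.0),
    ("Delta", "P4", 40.0),
]

print(quad_analysis(transactions))  # {1: 850.0, 2: 50.0, 3: 0.0, 4: 100.0}
```

Here Acme and Beta are the A customers and P1 and P2 the A products, so 85% of sales sits in Quad 1, while Quad 4 (Gamma and Delta buying P3 and P4) is the kind of tail where, following the reasoning above, you would look first for misaligned overhead and excess SKUs.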

Top-line growth is essential for the long-term viability of any business. Segmentation (level 4) is the best and safest way to generate new revenue streams. You start by looking inside your current markets to find new growth avenues, before you branch out into unknown areas. You should first exhaust adjacent or incremental revenues near the core business, and then look beyond the core for new markets and selective acquisitions as growth catalysts, preferably focusing on new product lines that will enhance your UVP to existing and prospective “eighty” customers. Tools such as cluster analysis and marketing mix planning, or the 5 P’s (price, product, promotion, place and people), help validate the ideas or segmentation hypotheses that might have surfaced through sales analytics tools in level 2. But segmentation is not just about finding niches; it’s mainly about the way you run the business, redirecting and specializing your sales and marketing organizations and eventually splitting them into new, expert business units. With segment-focused business units, expansion costs are kept closely coupled with each unit’s ability to deliver growth and to charge for the value of its UVP. Segmentation will lead to de-commoditization of your business, while empowering your best people to succeed in the refocused business units. But how do you sustain growth and profitability over time? Innovation (level 5) is how businesses sustain market share and create moats to defend against competitive attacks. You need to defend your market share and explore incremental opportunities, but you also need to be capable of developing new strategies that can lead to transformational growth. The key to new strategies is innovation, which is the driving force behind adaptability and the skill that yields direct change. Peter Drucker has a simple, elegant definition for innovation: “change that creates a new dimension of performance.” Sales innovation needs to go beyond products.
It needs to solve problems for customers and markets. It starts with the analytics and requires an understanding of the problems, or pain points, faced by the “eighty” customers within a specific market segment. Using ideation tools and techniques and problem-solving methodologies, the pain points are converted into ideas and solutions. Market-segment-focused business units are better prepared than any other type of organization to find the pain points and deliver innovative solutions to known and unknown customer problems. Specialized sales organizations are closer to end users, so they are best positioned to create a unique view of the market segment, using analytics as a microscope to slice and dice the customer base. This is where the majority of product and service innovation comes from: discovering pain points that customers might not even know they have and figuring out how to solve them collaboratively. Companies like General Electric and ITW have used this formula over and over to deliver outstanding performance. In summary, it takes more than sales analytics to grow and sustain profitable revenues. The data needs to be part of a framework that contains other strategic elements, such as those found in the 80/20 Business Process. An off-the-shelf software package will help you manage the effectiveness of your sales force and give you ideas, but it will not create a higher level of performance on its own. To attain transformational growth, you need to combine sales analytics with a selective mindset and a sharp niche focus, provided by specialized sales teams and business units that are constantly trying to innovate beyond products. This combination is a virtuous cycle that will create a strong moat and differentiate your company in the market over time.

To become effective and successful business leaders today, we need to move beyond embracing change; we need to enjoy it.
Bubbling economies, disrupted markets and complicated regulations are all-too-common occurrences in our daily lives. We’re on our own, and we had better like adventure or find a different line of work. But on top of an adventurous spirit, we also need, more than ever, our very own personalized toolbox, which we can carry with us as we move from one challenge to the next. And one of the most important tools in that toolbox is the learned ability to pick the small number of resources and initiatives that give us the best results.